Jakob Paul Zimmermann

I am a master's student in Computer Science at TU Berlin, a researcher in the Discrete Geometry Group at Freie Universität Berlin, and a working student at Fraunhofer HHI in Berlin.

Research

FU Berlin – Discrete Geometry Group

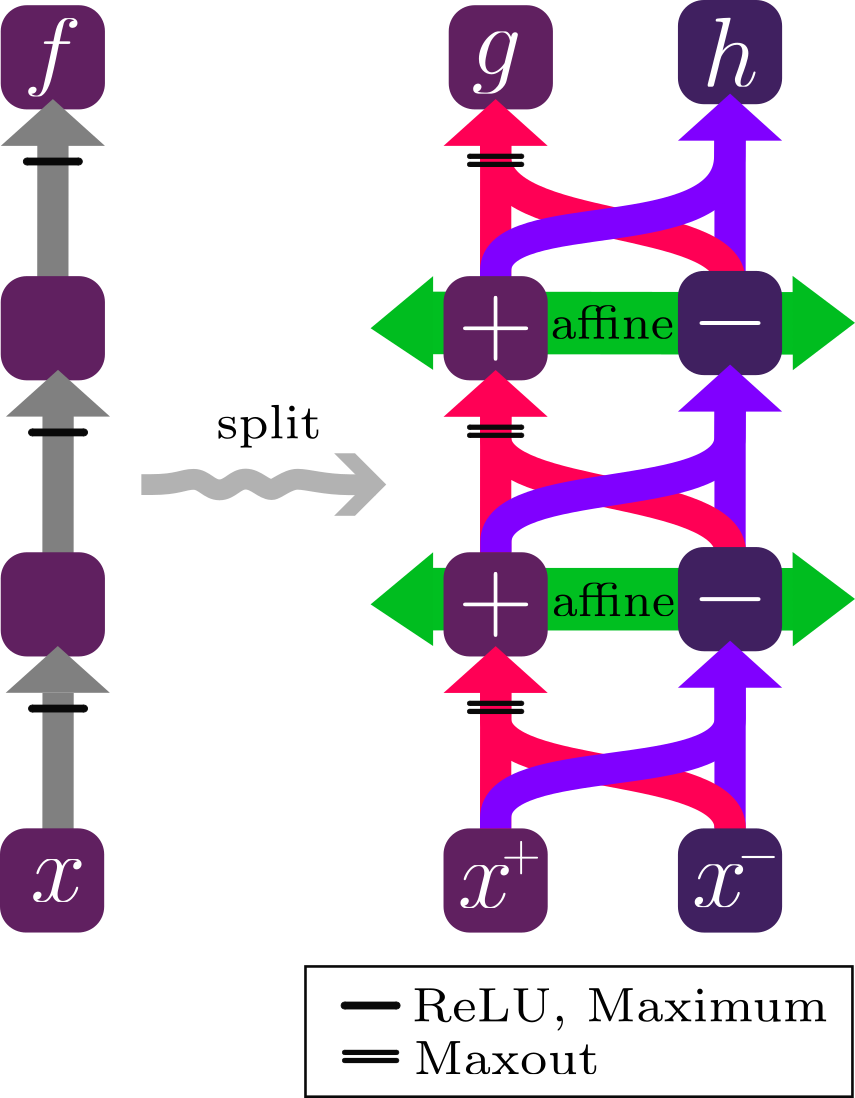

With Professor Georg Loho, I work on the mathematical foundations of deep learning. We investigate the geometry of the Newton polytopes of neural networks and develop new explainable AI (XAI) methods based on the difference-of-convex decomposition of neural networks.

Fraunhofer HHI – Berlin

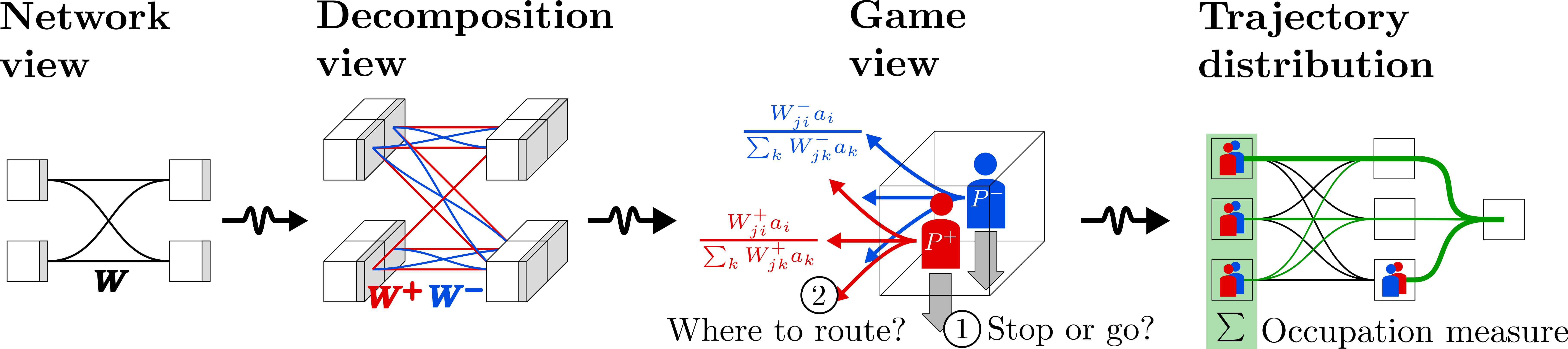

With Professor Wojciech Samek, I am working on new backpropagation-based XAI methods for the Transformer architecture.

Fraunhofer IOSB – Karlsruhe

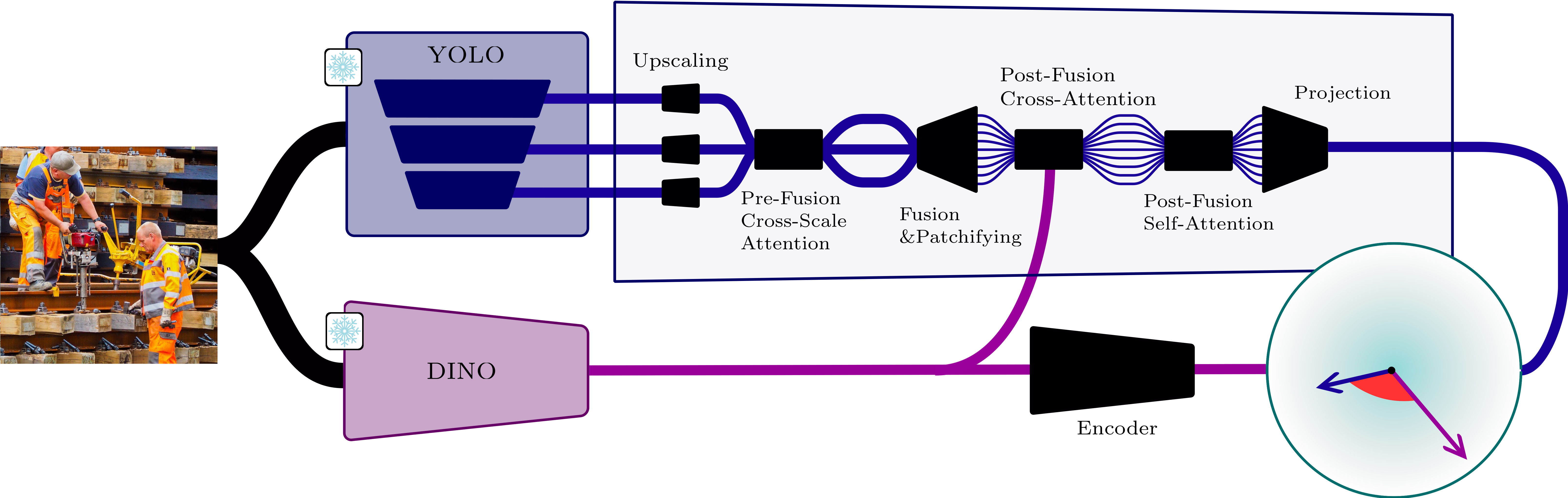

I have worked on runtime monitoring of image recognition models and on representation learning. I developed a knowledge-guided monitoring approach that operates on the internal representations of image recognition models and of embedding models such as DINO.

Goals

I want to understand neural networks at the level where their computations become mathematically inspectable: as piecewise-linear and geometric objects. My goal is to turn this structure into methods that make modern models more transparent, more monitorable, and more reliable in deployment.

Concretely, I work on three connected questions:

- What structure is hidden inside a trained network? I study decompositions of networks into monotone and convex parts, and reformulations of the backward pass as two-player games on an extended network graph. Both expose how positive and negative evidence propagate through a model.

- When can explanations be trusted? I develop attribution methods whose behaviour is tied to the network’s actual computation rather than only to visual plausibility.

- How can models recognise their own failures? I investigate monitoring methods that detect safety-critical perception failures by comparing internal model representations with foundation-model embeddings.

This puts my work at the interface of discrete geometry, explainable AI, and safety: I use Newton polytopes, difference-of-convex decompositions, and two-player games on computational graphs to derive explanations, certificates, and failure signals from the mathematical structure neural networks already have.
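To make the idea of a difference-of-convex decomposition concrete, here is a minimal sketch for a small ReLU network. It relies on the standard identity relu(p - q) = max(p, q) - q and on the fact that nonnegative combinations and pointwise maxima of convex functions are convex. This is a textbook construction and not necessarily the method developed in the research described above; the names `affine`, `relu_net` and `dc_forward` and the random weights are assumptions made for illustration.

```python
import random

def affine(W, v, b):
    """Compute W @ v + b for a list-of-rows matrix W."""
    return [sum(w * x for w, x in zip(row, v)) + bi for row, bi in zip(W, b)]

def relu_net(x, layers):
    """Plain forward pass: ReLU on hidden layers, linear output layer."""
    a = list(x)
    for i, (W, b) in enumerate(layers):
        a = affine(W, a, b)
        if i < len(layers) - 1:
            a = [max(v, 0.0) for v in a]
    return a

def dc_forward(x, layers):
    """Return (g, h) with relu_net(x) == g - h, where g and h are convex in x."""
    g, h = list(x), [0.0] * len(x)  # x = x - 0; both parts are linear, hence convex
    for i, (W, b) in enumerate(layers):
        # Split the weights as W = W_pos - W_neg with nonnegative entries.
        W_pos = [[max(w, 0.0) for w in row] for row in W]
        W_neg = [[max(-w, 0.0) for w in row] for row in W]
        zero = [0.0] * len(b)
        # W(g - h) + b = p - q, with p and q nonnegative combinations of g and h.
        p = [u + v for u, v in zip(affine(W_pos, g, b), affine(W_neg, h, zero))]
        q = [u + v for u, v in zip(affine(W_neg, g, zero), affine(W_pos, h, zero))]
        if i < len(layers) - 1:
            # relu(p - q) = max(p, q) - q, and a maximum of convex functions is convex.
            g, h = [max(u, v) for u, v in zip(p, q)], q
        else:
            g, h = p, q
    return g, h

def random_layers(sizes, rng):
    """Hypothetical helper: Gaussian weights and biases for the given layer sizes."""
    return [
        ([[rng.gauss(0, 1) for _ in range(n_in)] for _ in range(n_out)],
         [rng.gauss(0, 1) for _ in range(n_out)])
        for n_in, n_out in zip(sizes[:-1], sizes[1:])
    ]

rng = random.Random(0)
layers = random_layers([3, 4, 2], rng)
x = [rng.gauss(0, 1) for _ in range(3)]
g, h = dc_forward(x, layers)
print(relu_net(x, layers), [gi - hi for gi, hi in zip(g, h)])
```

The two printed vectors agree up to floating-point rounding. The convex part `g` and the convex part `h` can then be inspected separately, which is one way to see how positive and negative contributions are tracked through the layers.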

Publications

Preprints

Talks & Conferences

Mathematical Interests

Education

I completed my bachelor's degrees in Mathematics and Computer Science at the Karlsruhe Institute of Technology (KIT).

My bachelor's thesis, “Induced Turán problems”, was supervised by Maria Axenovich and Thorsten Ueckerdt. The thesis studies extremal problems for induced and biinduced subgraphs, explores connections to Vapnik–Chervonenkis (VC) dimension, and includes a simplified proof of the Erdős–Hajnal conjecture for graphs with bounded VC dimension.